
How to build a face similarity search app with Redis vector sets

March 25, 2026 | 15 minute read

TL;DR: Build a face similarity search app that uses Redis vector sets to find celebrity lookalikes. Upload a photo or take a selfie, generate a face embedding with a Vision Transformer model, and run a `VSIM` query to find the nearest matches in a pre-loaded dataset of 1,000 celebrity face vectors, all powered by Redis.

Note: This tutorial uses the code from the following git repository:

#What you'll learn

- How to store face embeddings in a Redis vector set with `VADD`

- How to run similarity searches against existing elements and new image vectors with `VSIM`

- How to filter similarity results by metadata attributes

- How Vision Transformer (ViT) models generate image embeddings for face comparison

- How to connect an Express and React app to Redis vector sets

#What you'll build

You'll run a three-service face similarity search app:

- A Python embedding service that converts uploaded face images into 768-dimension vectors using a ViT (Vision Transformer) model fine-tuned on celebrity faces

- An Express server that handles image uploads, calls the embedding service, and queries Redis vector sets with `VSIM`

- A React frontend that lets you upload a photo or take a selfie, then displays the closest celebrity matches ranked by similarity score

The app ships with a pre-loaded dataset of 1,000 celebrity face embeddings. You select or upload a face, and the app finds which celebrities look most similar using vector search in Redis.

#What is face similarity search?

Face similarity search compares a query face against a database of known faces to find the closest matches. Instead of comparing raw pixel data, the app converts each face into a vector embedding — a compact numerical representation that captures the visual features of the face. Two faces that look alike produce vectors that are close together in the embedding space.

The typical flow is:

- Embed — Convert each face image into a fixed-length vector (768 floats in this app) using a neural network.

- Store — Save the vectors in a data structure optimized for nearest-neighbor search.

- Query — Given a new face vector, find the stored vectors closest to it.
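
To make the three steps concrete before Redis enters the picture, here is a minimal brute-force sketch in Python. It is an illustration only: it assumes the Embed step has already produced vectors, and the names `alice`, `bob`, `carol` and their 3-dimension vectors are made up. It stores vectors in a plain dict and ranks them against a query by cosine similarity with a linear scan.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means they point the same way)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_k(query: list[float], store: dict[str, list[float]], k: int) -> list[tuple[str, float]]:
    """Query step: score every stored vector against the query and keep the k best (linear scan)."""
    scored = [(name, cosine_similarity(query, vec)) for name, vec in store.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]


# Store step: hypothetical toy "embeddings" (the real app uses 768 dimensions).
store = {
    "alice": [0.9, 0.1, 0.0],
    "bob": [0.1, 0.9, 0.0],
    "carol": [0.8, 0.2, 0.1],
}

# Query step: rank the stored faces against a new face vector.
print(top_k([1.0, 0.0, 0.0], store, k=2))
```

A linear scan compares the query with every stored face, so its cost grows with the dataset. An HNSW index trades a little accuracy for much faster approximate lookups.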

Redis vector sets handle the Store and Query steps natively. You store embeddings with `VADD` and query them with `VSIM`, which returns the approximate K nearest neighbors ranked by cosine similarity.

#Why use Redis vector sets for face similarity?

Redis vector sets give you a fast, schema-free path to nearest-neighbor search without the overhead of a separate vector database or a secondary index.

- Native data type: Vector sets are a first-class Redis data type (Redis 8+), just like strings or sorted sets. No external plugins needed.

- Built-in HNSW index: Every vector set automatically builds an HNSW (Hierarchical Navigable Small World) graph for approximate nearest-neighbor search. You don't create or manage indexes yourself.

- Attribute filtering: Each element can carry a JSON attribute payload, and `VSIM` supports inline `FILTER` expressions, so you can narrow results by metadata (e.g., name length, country) without a separate query.

- Simple API: `VADD` inserts and `VSIM` searches. Those two commands cover the core workflow, with no schema definitions and no index rebuild steps.

- Real-time updates: Add or remove elements at any time. The HNSW graph updates incrementally, so the set is always queryable.

For a deeper introduction to vector sets, see the Getting started with vector sets tutorial.

#How does the app work?

The app has three services that all connect through one Redis instance:

Two search paths exist:

- New element search: The user uploads a photo not in the dataset. The server sends the image to the Python embedding service, gets a 768-dim vector back, and runs `VSIM ... VALUES` to find the nearest neighbors.

- Existing element search: The user picks a celebrity card already in the dataset. The server runs `VSIM ... ELE` using that element's ID, so Redis compares against the stored vector directly.

#Prerequisites

If you're new to Redis vector sets, start with the Getting started with vector sets tutorial first.

#Step 1. Clone the repo

#Step 2. Run the app with Docker

Start all three services:

The repo includes a pre-built Redis snapshot (`database/redis-data/dump.rdb`) containing 1,000 celebrity face embeddings. Redis loads it automatically on startup, so no manual import is needed.

Once all containers are healthy:

- App: http://localhost:3000

- Embedding service: http://localhost:8009

- Redis: redis://localhost:6379

Note: The first startup takes a few minutes. The Python embedding service downloads the ViT model weights (~350 MB) on first launch. Docker caches the weights in a volume, so subsequent starts are fast.

You can verify the dataset loaded correctly:

You should see

1000, confirming that all celebrity embeddings are in the vector set.Open

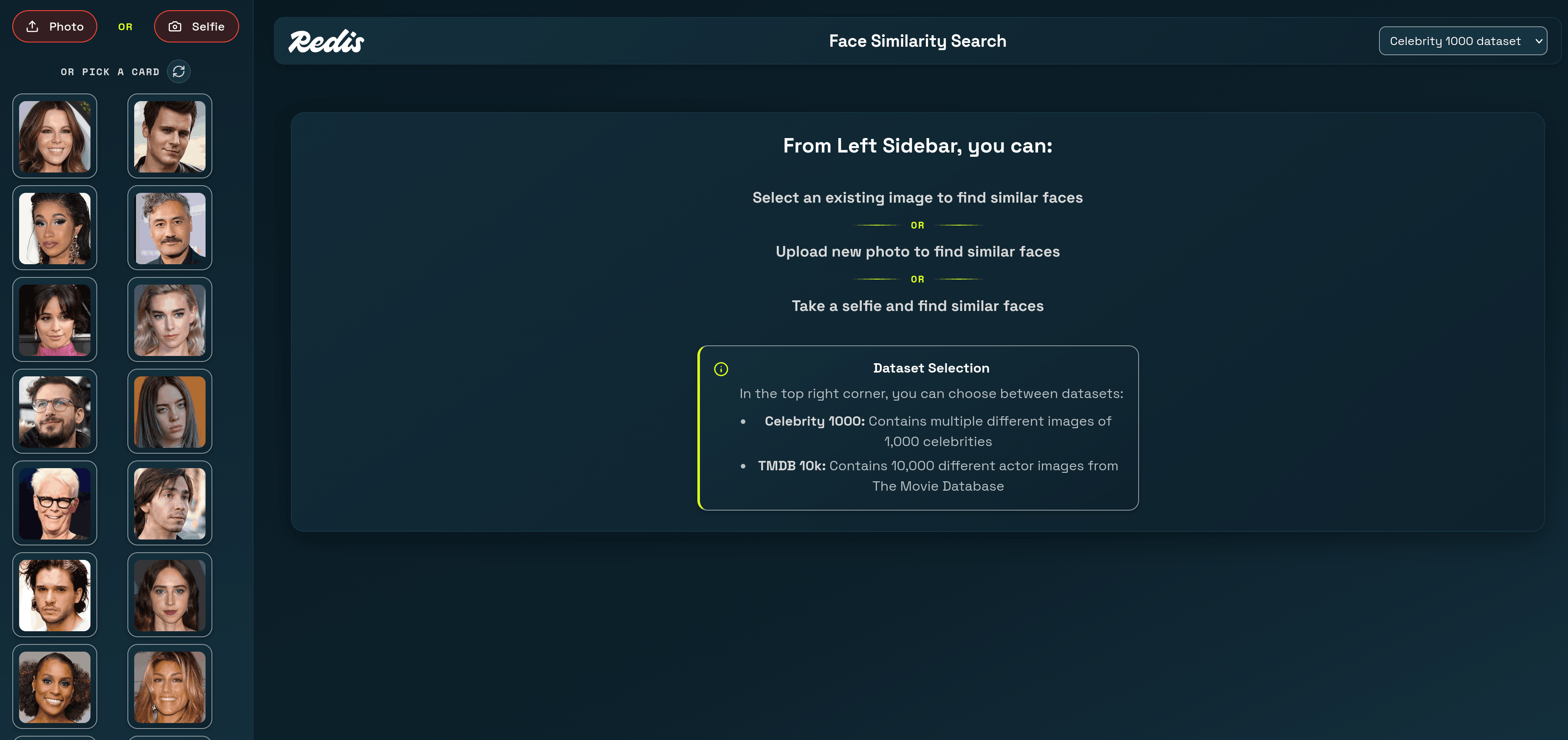

http://localhost:3000 in your browser. You'll see a grid of random celebrity face cards on the left. Click any card to find similar-looking celebrities, or upload your own photo to find your celebrity lookalike.#How does the app generate face embeddings?

The app uses two embedding strategies depending on the dataset. For the celebrity dataset, a Python microservice runs a Vision Transformer (ViT) model fine-tuned on celebrity face classification.

The embedding service is a FastAPI app that accepts an image upload and returns a 768-dimension vector:

The key steps:

- Preprocess: The `ViTImageProcessor` resizes and normalizes the image for the model.

- Forward pass: The model produces a hidden state for the CLS token and for each image patch. The CLS token (index 0 of the last hidden layer) captures a global summary of the face.

- Normalize: L2 normalization scales the vector to unit length, so the dot product of two embeddings equals their cosine similarity, the metric Redis vector sets use to rank results.
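
The last two steps can be illustrated without any ML framework. The sketch below is not the service's code. It assumes the last hidden layer is available as a nested list shaped `[num_tokens][hidden_size]`, with made-up 3-dimension values standing in for the model's 768. It takes the CLS row at index 0 and scales it to unit length; after that, a plain dot product equals cosine similarity.

```python
import math


def cls_embedding(last_hidden_state: list[list[float]]) -> list[float]:
    """Forward-pass output: take the CLS token's vector at index 0 of the last hidden layer."""
    return list(last_hidden_state[0])


def l2_normalize(vec: list[float]) -> list[float]:
    """Normalize step: scale a vector to unit length (L2 norm of 1)."""
    norm = math.sqrt(sum(x * x for x in vec))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in vec]


def dot(a: list[float], b: list[float]) -> float:
    """Plain dot product; equals cosine similarity once both inputs are unit length."""
    return sum(x * y for x, y in zip(a, b))


# Hypothetical last hidden layer for one image: row 0 is the CLS token, the rest are patches.
hidden = [
    [3.0, 4.0, 0.0],  # CLS token
    [0.5, 0.1, 0.2],  # patch 1
    [0.3, 0.7, 0.9],  # patch 2
]
embedding = l2_normalize(cls_embedding(hidden))
print(embedding)  # [0.6, 0.8, 0.0]
```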

The result is a 768-float vector where faces that look similar cluster near each other in the embedding space.

#How does the app store face data in Redis?

Each celebrity is stored as an element in a Redis vector set using the `VADD` command. The pre-built dataset file contains commands like `VADD vset:celeb VALUES 768 0.0234 -0.0891 0.0412 ... e1 SETATTR '{...}'`. Breaking down the command:

- `vset:celeb`: The vector set key name.

- `VALUES 768`: Each embedding has 768 dimensions.

- `0.0234 -0.0891 0.0412 ...`: The 768 floating-point values of the face embedding.

- `e1`: A unique element ID for this celebrity.

- `SETATTR '{...}'`: JSON metadata attached to the element. This app stores the celebrity name (`label`), the image file path (`imagePath`), and the name's character count (`charCount`).
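
A command like this can also be assembled in code. The helper below is hypothetical, not the repo's loader. It builds the `VADD` argument list for one celebrity and serializes the attributes with `json.dumps`. It assumes `charCount` is simply `len(label)`, spaces included, which may not match how the dataset computed it. The sample celebrity and 3-value embedding are made up.

```python
import json


def build_vadd_args(key: str, element_id: str, embedding: list[float],
                    label: str, image_path: str) -> list[str]:
    """Build: VADD <key> VALUES <dim> <v1> ... <vN> <element> SETATTR <json>."""
    attributes = {
        "label": label,
        "imagePath": image_path,
        # Assumption: charCount is the plain length of the name, spaces included.
        "charCount": len(label),
    }
    return [
        "VADD", key,
        "VALUES", str(len(embedding)), *(str(x) for x in embedding),
        element_id,
        "SETATTR", json.dumps(attributes),
    ]


# Hypothetical 3-dimension embedding and made-up celebrity; the real dataset uses 768 values.
args = build_vadd_args("vset:celeb", "e1", [0.0234, -0.0891, 0.0412],
                       "Jane Doe", "images/jane_doe.jpg")
print(args)
```

Each token is a separate argument, so the JSON needs none of the single quotes used when typing the command into a shell. With redis-py, a list like this can be sent with `r.execute_command(*args)`.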

The vector set automatically builds an HNSW index as elements are added. There's no separate index-creation step (such as `FT.CREATE` in Redis Search): the set is queryable immediately after the first `VADD`.

You can inspect any element's metadata with `VGETATTR` (for example, `VGETATTR vset:celeb e1`) and check the vector set's structure with `VINFO vset:celeb`. For this dataset, `VINFO` confirms that the set holds 1,000 elements with 768-dimension vectors and uses int8 quantization for compact storage.
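
To run that check from code instead of by eye, the sketch below converts a flat, RESP2-style `VINFO` reply into a dict and compares it with the expected dataset shape. It is an illustration, not part of the repo. The field names `quant-type`, `vector-dim`, and `size` come from the `VINFO` example in the Redis command docs; treat them as an assumption and re-check them against your server's actual reply, which may include more fields.

```python
def pairs_to_dict(reply: list) -> dict:
    """Turn a flat [key1, value1, key2, value2, ...] reply into a dict."""
    if len(reply) % 2 != 0:
        raise ValueError("expected an even number of items")
    return {reply[i]: reply[i + 1] for i in range(0, len(reply), 2)}


def check_celeb_set(info: dict, expected_size: int = 1000, expected_dim: int = 768) -> list[str]:
    """Compare VINFO fields with what this tutorial's dataset should contain; [] means OK."""
    problems = []
    if info.get("size") != expected_size:
        problems.append(f"size is {info.get('size')}, expected {expected_size}")
    if info.get("vector-dim") != expected_dim:
        problems.append(f"vector-dim is {info.get('vector-dim')}, expected {expected_dim}")
    if info.get("quant-type") != "int8":
        problems.append(f"quant-type is {info.get('quant-type')}, expected int8")
    return problems


# Illustrative reply containing only the fields checked here (not captured from the app).
sample_reply = ["quant-type", "int8", "vector-dim", 768, "size", 1000]
print(check_celeb_set(pairs_to_dict(sample_reply)))  # []
```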

#How does the app search for similar faces?

The app uses two forms of the `VSIM` command, depending on whether the query face is already in the dataset.

#Searching with an existing element

When a user clicks a celebrity card from the sidebar, the server runs `VSIM` with the `ELE` keyword, which tells Redis to use the stored vector for that element as the query. The Express server builds this query in `existing-element-search/index.ts`.

Redis returns a flat array of `[elementId, score, attributes, ...]` triples, which the server parses into structured results. The first result is always the query element itself with a perfect score of 1, so the server filters it out before sending results to the UI.
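
Here is the same logic as a minimal Python sketch; the repo implements it in TypeScript, and this is not that code. It makes three assumptions:

- The Redis version's `VSIM` accepts `WITHATTRIBS` (a newer Redis 8 option), which is what yields the triples described above.
- The client decodes replies to `str`, for example redis-py with `decode_responses=True`.
- Requesting one extra result to compensate for the dropped self-match is a hypothetical choice.

The sample reply is made up.

```python
import json
from dataclasses import dataclass
from typing import Optional


@dataclass
class Match:
    element_id: str
    score: float
    attributes: Optional[dict]


def build_ele_query(key: str, element_id: str, count: int) -> list[str]:
    """VSIM using a stored element's vector as the query."""
    # Hypothetical choice: ask for one extra result because the self-match is dropped later.
    return ["VSIM", key, "ELE", element_id,
            "WITHSCORES", "WITHATTRIBS", "COUNT", str(count + 1)]


def parse_triples(reply: list, query_id: Optional[str] = None) -> list[Match]:
    """Parse a flat [id, score, attrs, id, score, attrs, ...] reply, skipping the query element."""
    if len(reply) % 3 != 0:
        raise ValueError("expected [elementId, score, attributes] triples")
    matches = []
    for i in range(0, len(reply), 3):
        element_id, score, raw_attrs = reply[i], reply[i + 1], reply[i + 2]
        if element_id == query_id:
            continue  # the query element matches itself with a score of 1
        attrs = json.loads(raw_attrs) if raw_attrs else None
        matches.append(Match(element_id, float(score), attrs))
    return matches


# Illustrative reply (made-up IDs, scores, and labels), already decoded to str.
sample = ["e42", "1", '{"label": "A"}',
          "e7", "0.91", '{"label": "B"}',
          "e3", "0.88", None]
results = parse_triples(sample, query_id="e42")
print([(m.element_id, m.score) for m in results])  # [('e7', 0.91), ('e3', 0.88)]
```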

#Searching with a new image

When a user uploads a photo or takes a selfie, the face isn't in the dataset. The server:

- Uploads the image to disk.

- Sends it to the Python embedding service to get a 768-dim vector.

- Runs `VSIM` with the `VALUES` keyword, passing the vector directly.

The Express server builds this query in `new-element-search/index.ts`, and the `getCelebEmbedding` function sends the image to the Python service. Both search paths return the same response shape (a ranked list of celebrity matches with similarity scores and metadata), so the frontend renders them identically.
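
For the new-image path, here is a minimal Python sketch of the query builder; it is not the repo's TypeScript. It assumes the embedding service returned a plain list of 768 floats, and it checks the length and rejects NaN or infinity before building `VSIM ... VALUES`. It requests `WITHSCORES WITHATTRIBS`, assuming a Redis version that supports the latter, so the reply has the same `[elementId, score, attributes]` shape as the existing-element search. The sample vector is made up.

```python
import math

EMBEDDING_DIM = 768  # dimension produced by the ViT embedding service


def build_values_query(key: str, vector: list[float], count: int,
                       expected_dim: int = EMBEDDING_DIM) -> list[str]:
    """Build: VSIM <key> VALUES <dim> <v1> ... <vN> WITHSCORES WITHATTRIBS COUNT <n>."""
    if len(vector) != expected_dim:
        raise ValueError(f"expected {expected_dim} values, got {len(vector)}")
    if not all(math.isfinite(x) for x in vector):
        raise ValueError("embedding contains NaN or infinity")
    return ["VSIM", key, "VALUES", str(len(vector)), *(str(x) for x in vector),
            "WITHSCORES", "WITHATTRIBS", "COUNT", str(count)]


# Hypothetical unit-length embedding with all components equal.
vec = [1 / math.sqrt(EMBEDDING_DIM)] * EMBEDDING_DIM
args = build_values_query("vset:celeb", vec, count=10)
print(args[:4], args[-4:])
```

Unlike the existing-element path, this path has no self-match to drop, because the uploaded face is not an element of the set.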

#How does the app filter results by attributes?

The `VSIM` command supports a `FILTER` clause that narrows results based on element attributes. This app uses it to let users filter by name length (character count). For example, the filter expression `.charCount>=15` restricts results to similar faces whose celebrity name is at least 15 characters long. It references the `charCount` field in the element's JSON attributes, and Redis evaluates the filter against each candidate before including it in the results. The frontend sends the filter as part of the search request.

Filters support both exact string matches (`.country=="UNITED_STATES"`) and numeric comparisons (`.charCount>=15`). Multiple filters combine with `and`.

Note: Filter expressions use JSONPath-like dot notation to reference attribute fields. The attribute must exist in the element's `SETATTR` JSON for the filter to match.
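
A hypothetical Python helper shows how a list of conditions could become one `FILTER` expression. Its `(field, operator, value)` tuple format is an assumption for illustration, not the repo's request shape. The helper works as follows:

- String values are quoted with `json.dumps`, so they come out double-quoted like the `.country` example. This assumes plain strings without embedded quotes; check the Redis filter-expression docs for escaping rules.
- Numbers are written as-is.
- Field names are restricted to identifiers, so user input cannot smuggle in extra clauses.
- Clauses are joined with `and`.

```python
import json

ALLOWED_OPS = {"==", "!=", ">", ">=", "<", "<="}


def build_filter(conditions: list[tuple[str, str, object]]) -> str:
    """Combine (field, operator, value) conditions into one FILTER expression joined with 'and'."""
    clauses = []
    for field, op, value in conditions:
        if op not in ALLOWED_OPS:
            raise ValueError(f"unsupported operator: {op}")
        if not field.isidentifier():
            raise ValueError(f"unexpected attribute name: {field}")
        if isinstance(value, str):
            literal = json.dumps(value)  # double-quoted string literal, e.g. "UNITED_STATES"
        elif isinstance(value, (int, float)) and not isinstance(value, bool):
            literal = str(value)  # numbers are written as-is
        else:
            raise ValueError(f"unsupported value for {field}: {value!r}")
        clauses.append(f".{field}{op}{literal}")
    return " and ".join(clauses)


def with_filter(vsim_args: list[str], conditions: list[tuple[str, str, object]]) -> list[str]:
    """Return a copy of the VSIM arguments with FILTER <expr> appended when filters are present."""
    if not conditions:
        return list(vsim_args)
    return [*vsim_args, "FILTER", build_filter(conditions)]


expr = build_filter([("charCount", ">=", 15), ("country", "==", "UNITED_STATES")])
print(expr)  # .charCount>=15 and .country=="UNITED_STATES"
```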

#How does the app display random sample images?

The sidebar shows a random selection of celebrity cards using two vector set commands. First, `VRANDMEMBER` picks random element IDs. Then `VGETATTR` retrieves the JSON attributes for each element, and the server combines these into image cards.

Each click of the refresh button fetches a new random set, so users see different celebrities each time.
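
A minimal sketch of the merge step follows, not the repo's code. It assumes the `VRANDMEMBER` reply is a list of IDs and that the `VGETATTR` replies arrive in the same order, each being one JSON string, or `None` when an element has no attributes. The `Card` type and the sample values are hypothetical; the field names `label` and `imagePath` come from the dataset attributes described earlier.

```python
import json
from dataclasses import dataclass
from typing import Optional


@dataclass
class Card:
    element_id: str
    label: str
    image_path: str


def build_cards(ids: list[str], raw_attrs: list[Optional[str]]) -> list[Card]:
    """Pair each random element ID with its VGETATTR JSON; skip elements without attributes."""
    if len(ids) != len(raw_attrs):
        raise ValueError("expected one VGETATTR reply per element ID")
    cards = []
    for element_id, raw in zip(ids, raw_attrs):
        if raw is None:
            continue  # VGETATTR replies with nil when an element has no attributes
        attrs = json.loads(raw)
        cards.append(Card(element_id, attrs["label"], attrs["imagePath"]))
    return cards


# Made-up sample data standing in for VRANDMEMBER and VGETATTR replies.
ids = ["e5", "e9", "e12"]
raw = ['{"label": "Jane Doe", "imagePath": "img/e5.jpg", "charCount": 8}',
       None,
       '{"label": "John Roe", "imagePath": "img/e12.jpg", "charCount": 8}']
print([c.element_id for c in build_cards(ids, raw)])  # ['e5', 'e12']
```

As with `SRANDMEMBER`, a positive count passed to `VRANDMEMBER` returns distinct elements. With redis-py, the per-element `VGETATTR` calls could be batched in a pipeline (`pipe = r.pipeline()`, one `pipe.execute_command("VGETATTR", "vset:celeb", element_id)` per ID, then `pipe.execute()`) to fetch a whole sidebar in one round trip.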

#What Redis vector set commands does this app use?

Here's a summary of every vector set command the app relies on:

| Command | Purpose | Example |
|---|---|---|
| `VADD` | Store a face embedding with metadata | `VADD 'vset:celeb' VALUES 768 ... 'e1' SETATTR '{...}'` |
| `VSIM` | Find similar faces by element or vector | `VSIM 'vset:celeb' ELE 'e42' WITHSCORES COUNT 10` |
| `VRANDMEMBER` | Pick random elements for the sidebar | `VRANDMEMBER 'vset:celeb' 100` |
| `VGETATTR` | Retrieve an element's JSON attributes | `VGETATTR 'vset:celeb' 'e42'` |
| `VCARD` | Count elements in the set | `VCARD 'vset:celeb'` |
| `VINFO` | Inspect set structure and config | `VINFO 'vset:celeb'` |

#Ready to build face similarity search with Redis?

You've learned how to:

- Store face embeddings in a Redis vector set with `VADD` and JSON attributes.

- Search for similar faces using `VSIM` with both existing elements and new image vectors.

- Filter results by metadata attributes like name length.

- Generate face embeddings with a ViT vision model and connect them to Redis through an Express API.

Vector sets make similarity search straightforward: two commands (`VADD` and `VSIM`) handle the core storage and retrieval workflow, with built-in HNSW indexing and attribute filtering.

#Next steps

- Explore the full vector sets data type docs.

- Try the Getting started with vector sets tutorial for a text-based similarity example.

- Read the `VADD` and `VSIM` command references for all available options.

- Learn about vector search with Redis Search for more complex queries combining vector similarity with full-text search.

- Build a semantic text search app using Redis vectors with text embeddings.