Redis as a vector database quick start guide

Understand how to use Redis as a vector database

This quick start guide helps you to:

- Understand what a vector database is

- Create a Redis vector database

- Create vector embeddings and store vectors

- Query data and perform a vector search

The code samples in this guide use redis-py, the Python client library for Redis. You may also be interested in RedisVL, a Python client library for Redis that is highly specialized for vector processing, and in the vector query examples for the other client libraries.

Understand vector databases

Data is often unstructured, which means that it isn't described by a well-defined schema. Examples of unstructured data include text passages, images, videos, or audio. One approach to storing and searching through unstructured data is to use vector embeddings.

- What are vectors? In machine learning and AI, vectors are sequences of numbers that represent data. They are the inputs and outputs of models, encapsulating underlying information in a numerical form. Vectors transform unstructured data, such as text, images, videos, and audio, into a format that machine learning models can process.

- Why are they important? Vectors capture complex patterns and semantic meanings inherent in data, making them powerful tools for a variety of applications. They allow machine learning models to understand and manipulate unstructured data more effectively.

- Enhancing traditional search. Traditional keyword or lexical search relies on exact matches of words or phrases, which can be limiting. In contrast, vector search, or semantic search, leverages the rich information captured in vector embeddings. By mapping data into a vector space, similar items are positioned near each other based on their meaning. This approach allows for more accurate and meaningful search results, as it considers the context and semantic content of the query rather than just the exact words used.
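To make the idea that similar items sit near each other in vector space more concrete, here is a minimal sketch that compares toy vectors using cosine similarity. The three-dimensional vectors and their names are invented for illustration; real embeddings come from a model and typically have hundreds of dimensions.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical toy "embeddings"; the values are made up for this example.
kids_bike = [0.9, 0.1, 0.0]
childrens_bicycle = [0.8, 0.2, 0.1]
road_racer = [0.1, 0.2, 0.9]

similar = cosine_similarity(kids_bike, childrens_bicycle)
different = cosine_similarity(kids_bike, road_racer)

# The two child-oriented vectors point in nearly the same direction,
# so their similarity is higher than that of the unrelated pair.
print(similar > different)
```

A semantic search ranks stored items by a measure like this one instead of by shared keywords.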

Create a Redis vector database

You can use Redis Open Source as a vector database. It allows you to:

- Store vectors and the associated metadata within hashes or JSON documents

- Create and configure secondary indices for search

- Perform vector searches

- Update vectors and metadata

- Delete and clean up

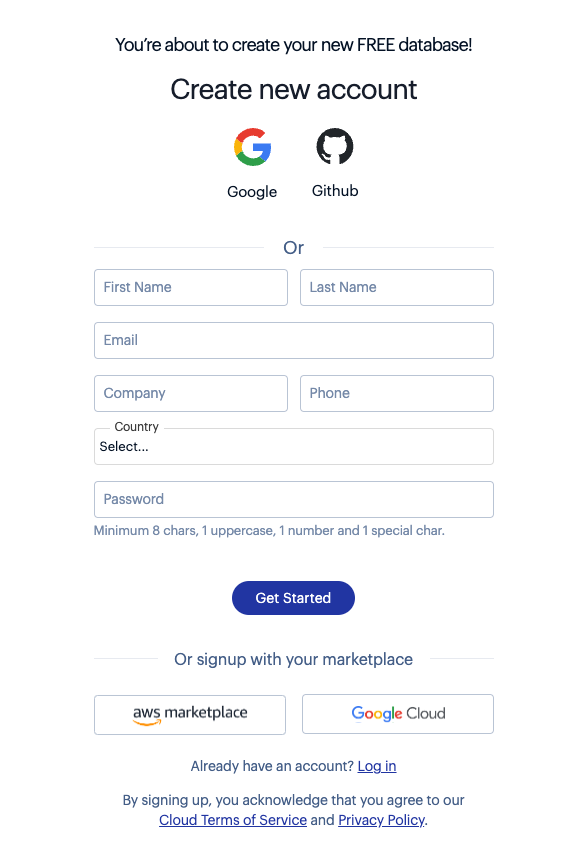

The easiest way to get started is to use Redis Cloud:

- Create a free account.

- Follow the instructions to create a free database.

This free Redis Cloud database comes out of the box with all the Redis Open Source features.

You can alternatively use the installation guides to install Redis on your local machine.

Install the required Python packages

Create a Python virtual environment and install the following dependencies using pip:

- redis: You can find further details about the redis-py client library in the clients section of this documentation site.

- pandas: Pandas is a data analysis library.

- sentence-transformers: You will use the SentenceTransformers framework to generate embeddings on full text.

- tabulate: pandas uses tabulate to render Markdown.
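After installing, you may want to confirm that the packages can be found from inside your virtual environment. The following is a small sketch using only the standard library; `missing_modules` is a hypothetical helper written for this example, and the list uses import names, which can differ from the pip package names.

```python
import importlib.util

# Import names of the packages listed above. The sentence-transformers
# package is imported under the name sentence_transformers.
REQUIRED_MODULES = ["redis", "pandas", "sentence_transformers", "tabulate"]


def missing_modules(module_names):
    """Return the names from module_names that cannot be found for import."""
    return [
        name for name in module_names if importlib.util.find_spec(name) is None
    ]


missing = missing_modules(REQUIRED_MODULES)
if missing:
    print("Missing modules:", ", ".join(missing))
else:
    print("All required modules are installed.")
```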

You will also need the following imports in your Python code. They open the complete code sample for this guide, which is listed here in full; the sections below walk through it step by step:

```python
"""
Code samples for vector database quickstart pages:
    https://redis.io/docs/latest/develop/get-started/vector-database/
"""

import json
import time

import numpy as np
import pandas as pd
import requests
import redis
from redis.commands.search.field import (
    NumericField,
    TagField,
    TextField,
    VectorField,
)
from redis.commands.search.index_definition import IndexDefinition, IndexType
from redis.commands.search.query import Query
from sentence_transformers import SentenceTransformer

URL = (
    "https://raw.githubusercontent.com/bsbodden/redis_vss_getting_started"
    "/main/data/bikes.json"
)
response = requests.get(URL, timeout=10)
bikes = response.json()

json.dumps(bikes[0], indent=2)

client = redis.Redis(host="localhost", port=6379, decode_responses=True)

res = client.ping()
# >>> True

pipeline = client.pipeline()
for i, bike in enumerate(bikes, start=1):
    redis_key = f"bikes:{i:03}"
    pipeline.json().set(redis_key, "$", bike)
res = pipeline.execute()
# >>> [True, True, True, True, True, True, True, True, True, True, True]

res = client.json().get("bikes:010", "$.model")
# >>> ['Summit']

keys = sorted(client.keys("bikes:*"))
# >>> ['bikes:001', 'bikes:002', ..., 'bikes:011']

descriptions = client.json().mget(keys, "$.description")
descriptions = [item for sublist in descriptions for item in sublist]
embedder = SentenceTransformer("msmarco-distilbert-base-v4")
embeddings = embedder.encode(descriptions).astype(np.float32).tolist()
VECTOR_DIMENSION = len(embeddings[0])
# >>> 768

pipeline = client.pipeline()
for key, embedding in zip(keys, embeddings):
    pipeline.json().set(key, "$.description_embeddings", embedding)
pipeline.execute()
# >>> [True, True, True, True, True, True, True, True, True, True, True]

res = client.json().get("bikes:010")
# >>>
# {
#   "model": "Summit",
#   "brand": "nHill",
#   "price": 1200,
#   "type": "Mountain Bike",
#   "specs": {
#     "material": "alloy",
#     "weight": "11.3"
#   },
#   "description": "This budget mountain bike from nHill performs well..."
#   "description_embeddings": [
#     -0.538114607334137,
#     -0.49465855956077576,
#     -0.025176964700222015,
#     ...
#   ]
# }

schema = (
    TextField("$.model", no_stem=True, as_name="model"),
    TextField("$.brand", no_stem=True, as_name="brand"),
    NumericField("$.price", as_name="price"),
    TagField("$.type", as_name="type"),
    TextField("$.description", as_name="description"),
    VectorField(
        "$.description_embeddings",
        "FLAT",
        {
            "TYPE": "FLOAT32",
            "DIM": VECTOR_DIMENSION,
            "DISTANCE_METRIC": "COSINE",
        },
        as_name="vector",
    ),
)
definition = IndexDefinition(prefix=["bikes:"], index_type=IndexType.JSON)
res = client.ft("idx:bikes_vss").create_index(fields=schema, definition=definition)
# >>> 'OK'

info = client.ft("idx:bikes_vss").info()
num_docs = info["num_docs"]
indexing_failures = info["hash_indexing_failures"]
# print(f"{num_docs} documents indexed with {indexing_failures} failures")
# >>> 11 documents indexed with 0 failures

query = Query("@brand:Peaknetic")
res = client.ft("idx:bikes_vss").search(query).docs
# print(res)
# >>> [
#   Document {
#     'id': 'bikes:008',
#     'payload': None,
#     'brand': 'Peaknetic',
#     'model': 'Soothe Electric bike',
#     'price': '1950', 'description_embeddings': ...

query = Query("@brand:Peaknetic").return_fields("id", "brand", "model", "price")
res = client.ft("idx:bikes_vss").search(query).docs
# print(res)
# >>> [
#   Document {
#     'id': 'bikes:008',
#     'payload': None,
#     'brand': 'Peaknetic',
#     'model': 'Soothe Electric bike',
#     'price': '1950'
#   },
#   Document {
#     'id': 'bikes:009',
#     'payload': None,
#     'brand': 'Peaknetic',
#     'model': 'Secto',
#     'price': '430'
#   }
# ]

query = Query("@brand:Peaknetic @price:[0 1000]").return_fields(
    "id", "brand", "model", "price"
)
res = client.ft("idx:bikes_vss").search(query).docs
# print(res)
# >>> [
#   Document {
#     'id': 'bikes:009',
#     'payload': None,
#     'brand': 'Peaknetic',
#     'model': 'Secto',
#     'price': '430'
#   }
# ]

queries = [
    "Bike for small kids",
    "Best Mountain bikes for kids",
    "Cheap Mountain bike for kids",
    "Female specific mountain bike",
    "Road bike for beginners",
    "Commuter bike for people over 60",
    "Comfortable commuter bike",
    "Good bike for college students",
    "Mountain bike for beginners",
    "Vintage bike",
    "Comfortable city bike",
]

encoded_queries = embedder.encode(queries)
len(encoded_queries)
# >>> 11


def create_query_table(query, queries, encoded_queries, extra_params=None):
    """
    Creates a query table.
    """
    results_list = []
    for i, encoded_query in enumerate(encoded_queries):
        result_docs = (
            client.ft("idx:bikes_vss")
            .search(
                query,
                {"query_vector": np.array(encoded_query, dtype=np.float32).tobytes()}
                | (extra_params if extra_params else {}),
            )
            .docs
        )
        for doc in result_docs:
            vector_score = round(1 - float(doc.vector_score), 2)
            results_list.append(
                {
                    "query": queries[i],
                    "score": vector_score,
                    "id": doc.id,
                    "brand": doc.brand,
                    "model": doc.model,
                    "description": doc.description,
                }
            )

    # Optional: convert the table to Markdown using Pandas
    queries_table = pd.DataFrame(results_list)
    queries_table.sort_values(
        by=["query", "score"], ascending=[True, False], inplace=True
    )
    queries_table["query"] = queries_table.groupby("query")["query"].transform(
        lambda x: [x.iloc[0]] + [""] * (len(x) - 1)
    )
    queries_table["description"] = queries_table["description"].apply(
        lambda x: (x[:497] + "...") if len(x) > 500 else x
    )
    return queries_table.to_markdown(index=False)


query = (
    Query("(*)=>[KNN 3 @vector $query_vector AS vector_score]")
    .sort_by("vector_score")
    .return_fields("vector_score", "id", "brand", "model", "description")
    .dialect(2)
)

table = create_query_table(query, queries, encoded_queries)
print(table)
# >>> | Best Mountain bikes for kids | 0.54 | bikes:003...

hybrid_query = (
    Query("(@brand:Peaknetic)=>[KNN 3 @vector $query_vector AS vector_score]")
    .sort_by("vector_score")
    .return_fields("vector_score", "id", "brand", "model", "description")
    .dialect(2)
)
table = create_query_table(hybrid_query, queries, encoded_queries)
print(table)
# >>> | Best Mountain bikes for kids | 0.3 | bikes:008...

range_query = (
    Query(
        "@vector:[VECTOR_RANGE $range $query_vector]=>"
        "{$YIELD_DISTANCE_AS: vector_score}"
    )
    .sort_by("vector_score")
    .return_fields("vector_score", "id", "brand", "model", "description")
    .paging(0, 4)
    .dialect(2)
)
table = create_query_table(
    range_query, queries[:1],
    encoded_queries[:1],
    {"range": 0.55}
)
print(table)
# >>> | Bike for small kids | 0.52 | bikes:001 | Velorim |...
```

Connect

Connect to Redis. By default, Redis returns binary responses. To decode them, pass the decode_responses parameter set to True:

```python
client = redis.Redis(host="localhost", port=6379, decode_responses=True)

res = client.ping()
# >>> True
```

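The difference between binary and decoded responses can be illustrated without a running server. The sketch below is not redis-py's implementation: `decode_reply` is a hypothetical stand-in that mimics, for simple replies, the effect of decode_responses=True, and it assumes UTF-8 encoding. The sample reply is invented for illustration.

```python
def decode_reply(reply, encoding="utf-8"):
    """Recursively decode bytes in a reply, leaving other values unchanged."""
    if isinstance(reply, bytes):
        return reply.decode(encoding)
    if isinstance(reply, list):
        return [decode_reply(item, encoding) for item in reply]
    return reply


# Invented example of an undecoded reply: byte strings plus an integer.
raw_reply = [b"bikes:001", b"bikes:002", 11]

decoded = decode_reply(raw_reply)
print(decoded)
```

With decoding enabled on the client, you work with `str` values directly and do not need a helper like this.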

If you use a Redis Cloud database instead of a local Redis server, copy the connection details from the database's configuration page. For example, the following connection string belongs to a Cloud database that is hosted in the AWS region us-east-1 and listens on port 16379: redis-16379.c283.us-east-1-4.ec2.cloud.redislabs.com:16379. The connection string has the format host:port. You must also copy the username and password of your Cloud database. The line of code for connecting with the default user then changes to client = redis.Redis(host="redis-16379.c283.us-east-1-4.ec2.cloud.redislabs.com", port=16379, password="your_password_here", decode_responses=True).

Prepare the demo dataset

This quick start guide uses the bikes dataset. Here is an example document from it:

```json
{
  "model": "Jigger",
  "brand": "Velorim",
  "price": 270,
  "type": "Kids bikes",
  "specs": {
    "material": "aluminium",
    "weight": "10"
  },
  "description": "Small and powerful, the Jigger is the best ride for the smallest of tikes! ..."
}
```

The description field contains free-form text descriptions of bikes and will be used to create vector embeddings.

1. Fetch the demo data

You need to first fetch the demo dataset as a JSON array:

```python
URL = (
    "https://raw.githubusercontent.com/bsbodden/redis_vss_getting_started"
    "/main/data/bikes.json"
)
response = requests.get(URL, timeout=10)
bikes = response.json()
```

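Before storing the data, it can help to confirm that the response really is a JSON array of bike documents. The following is a sketch, not part of the original code sample: `validate_bikes` is a hypothetical helper, and `REQUIRED_KEYS` is an assumption based on the fields of the example document shown earlier.

```python
# Assumed set of fields, taken from the example document in this guide.
REQUIRED_KEYS = {"model", "brand", "price", "type", "specs", "description"}


def validate_bikes(bikes):
    """Return the number of documents, or raise ValueError if the data is malformed."""
    if not isinstance(bikes, list):
        raise ValueError("expected a JSON array of bike documents")
    for index, bike in enumerate(bikes):
        if not isinstance(bike, dict):
            raise ValueError(f"item {index} is not a JSON object")
        missing = REQUIRED_KEYS - set(bike)
        if missing:
            raise ValueError(f"item {index} is missing keys: {sorted(missing)}")
    return len(bikes)


# Stand-in for the fetched data so the example runs without a network connection.
sample = [
    {
        "model": "Jigger",
        "brand": "Velorim",
        "price": 270,
        "type": "Kids bikes",
        "specs": {"material": "aluminium", "weight": "10"},
        "description": "Small and powerful, the Jigger is the best ride ...",
    }
]
print(validate_bikes(sample))
```

In the guide's flow you would call `validate_bikes(bikes)` on the parsed response instead of on `sample`.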

Inspect the structure of one of the bike JSON documents:

```python
json.dumps(bikes[0], indent=2)
```

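Note that `json.dumps` returns a string rather than printing it, so outside an interactive session you need to print the result to see it. Here is a minimal sketch that uses the example document shown earlier as a stand-in for `bikes[0]`, so it runs without a network connection:

```python
import json

# Stand-in for bikes[0]; copied from the example document in this guide.
bike = {
    "model": "Jigger",
    "brand": "Velorim",
    "price": 270,
    "type": "Kids bikes",
    "specs": {"material": "aluminium", "weight": "10"},
    "description": "Small and powerful, the Jigger is the best ride ...",
}

# indent=2 puts each field on its own line, nested two spaces per level.
pretty = json.dumps(bike, indent=2)
print(pretty)
```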

2. Store the demo data in Redis

Now iterate over the bikes array to store the data as JSON documents in Redis by using the JSON.SET command. The code below uses a pipeline to minimize network round trips:

```python
pipeline = client.pipeline()
for i, bike in enumerate(bikes, start=1):
    redis_key = f"bikes:{i:03}"
    pipeline.json().set(redis_key, "$", bike)
res = pipeline.execute()
# >>> [True, True, True, True, True, True, True, True, True, True, True]
```
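The key pattern used in the loop above can be tried on its own. The sketch below only builds key names and does not connect to Redis; the three placeholder documents are invented for illustration. Zero-padding the counter to three digits keeps the keys in numeric order when they are sorted as strings.

```python
# Placeholder documents; only their count matters for this example.
bikes = [{"model": "A"}, {"model": "B"}, {"model": "C"}]

# Same key format as in the loop above: "bikes:" plus a zero-padded counter.
keys = [f"bikes:{i:03}" for i, _ in enumerate(bikes, start=1)]
print(keys)

# Without padding, string sorting places "bikes:10" before "bikes:2".
unpadded = sorted(f"bikes:{i}" for i in (2, 10))
padded = sorted(f"bikes:{i:03}" for i in (2, 10))
print(unpadded)
print(padded)
```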

Once the data is loaded, you can retrieve a specific attribute from one of the JSON documents in Redis using a JSONPath expression:

res = client.json().get("bikes:010", "$.model")

# >>> ['Summit']

3. Select a text embedding model

Hugging Face hosts a large catalog of text embedding models that you can serve locally through the SentenceTransformers framework. This guide uses msmarco-distilbert-base-v4, one of the MS MARCO models, which are widely used in search engines, chatbots, and other AI applications.

from sentence_transformers import SentenceTransformer

embedder = SentenceTransformer('msmarco-distilbert-base-v4')
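Embedding models map text to vectors so that related texts end up close together. As a rough illustration of what "close" means under the COSINE distance metric that the index in this guide is configured with, the sketch below computes cosine distance in plain Python. The three-dimensional vectors are made up for readability (the model above produces 768-dimensional vectors), and `cosine_distance` is a hypothetical helper, not a Redis or SentenceTransformers function.

```python
import math
from typing import Sequence


def cosine_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Return 1 - cosine similarity of two equal-length, non-zero vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("cosine distance is undefined for zero vectors")
    return 1.0 - dot / (norm_a * norm_b)


# Made-up toy vectors, not real model output.
kids_bike = [0.9, 0.1, 0.0]
small_bike = [0.8, 0.2, 0.1]
road_race = [0.0, 0.2, 0.9]

near = cosine_distance(kids_bike, small_bike)
far = cosine_distance(kids_bike, road_race)
print(near < far)  # True
```

A smaller distance means a closer match, which is why the query helper in this guide reports `1 - vector_score` as a similarity score.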

4. Generate text embeddings

Retrieve all the Redis keys that start with the prefix "bikes:" and sort them:

keys = sorted(client.keys("bikes:*"))

# >>> ['bikes:001', 'bikes:002', ..., 'bikes:011']
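KEYS walks the entire keyspace in a single call, which is fine for the 11 demo documents but can block a busy server. A common alternative is the cursor-based SCAN command, which redis-py exposes as scan_iter(). The sketch below is one assumed way to wrap it: `sorted_keys` is a hypothetical helper, and `FakeClient` is a stand-in so that the example runs without a server.

```python
import fnmatch
from typing import Iterable, Iterator, List


def sorted_keys(client, pattern: str) -> List[str]:
    """Collect keys matching `pattern` via scan_iter() and sort them."""
    # SCAN may return a key more than once, so deduplicate with a set.
    return sorted(set(client.scan_iter(match=pattern)))


class FakeClient:
    """Stand-in for redis.Redis that only implements scan_iter()."""

    def __init__(self, keys: Iterable[str]) -> None:
        self._keys = list(keys)

    def scan_iter(self, match: str = "*") -> Iterator[str]:
        for key in self._keys:
            if fnmatch.fnmatchcase(key, match):
                yield key


fake = FakeClient(["bikes:002", "users:1", "bikes:001", "bikes:002"])
print(sorted_keys(fake, "bikes:*"))  # ['bikes:001', 'bikes:002']
```

With a real connection created with decode_responses=True, as in this guide, `sorted_keys(client, "bikes:*")` would take the place of `sorted(client.keys("bikes:*"))`.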

Use the keys as input to the JSON.MGET command, along with the $.description path, to collect the descriptions in a list. Then pass the list of descriptions to the embedder's .encode() method:

descriptions = client.json().mget(keys, "$.description")

descriptions = [item for sublist in descriptions for item in sublist]

embedder = SentenceTransformer("msmarco-distilbert-base-v4")

embeddings = embedder.encode(descriptions).astype(np.float32).tolist()

VECTOR_DIMENSION = len(embeddings[0])

# >>> 768
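With a JSONPath argument, each entry in the JSON.MGET reply is itself a list of matches, which is why the code above flattens the result. The sketch below uses a hard-coded `reply` in place of a real server response (the description strings are shortened placeholders) and shows one way to guard against keys that do not exist, for which the reply holds None. `flatten_matches` is a hypothetical helper.

```python
from typing import List, Optional, Sequence


def flatten_matches(reply: Sequence[Optional[List[str]]]) -> List[str]:
    """Flatten per-key match lists, skipping keys that returned None."""
    return [item for matches in reply if matches is not None for item in matches]


# Placeholder data shaped like a JSON.MGET reply for "$.description".
reply = [
    ["Small and light bike for kids..."],
    None,  # a key that does not exist
    ["This budget mountain bike..."],
]

descriptions = flatten_matches(reply)
print(len(descriptions))  # 2
```

Skipping entries changes the alignment between keys and descriptions, so real code would need to filter the key list the same way before pairing keys with embeddings.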

Add the vectorized descriptions to the bike documents in Redis using the JSON.SET command. The following code inserts a new field into each document under the JSONPath $.description_embeddings. Once again, use a pipeline to avoid unnecessary network round trips:

pipeline = client.pipeline()

for key, embedding in zip(keys, embeddings):
    pipeline.json().set(key, "$.description_embeddings", embedding)

pipeline.execute()

# >>> [True, True, True, True, True, True, True, True, True, True, True]
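The embeddings were converted with .astype(np.float32) before being stored, which matches the FLOAT32 type that the vector index in this guide declares. As a small illustration of what that conversion does to a value, the sketch below round-trips a Python float through 32-bit precision using only the standard library. `to_float32` is a hypothetical helper, and struct stands in for NumPy here.

```python
import struct


def to_float32(value: float) -> float:
    """Round a Python float (64-bit) to the nearest 32-bit float value."""
    return struct.unpack("<f", struct.pack("<f", value))[0]


original = 0.1
stored = to_float32(original)

# The stored value differs slightly from the 64-bit original...
print(stored == original)             # False
print(abs(stored - original) < 1e-7)  # True
# ...and converting again changes nothing further.
print(to_float32(stored) == stored)   # True
```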

res = client.json().get("bikes:010")

# >>>

# {

# "model": "Summit",

# "brand": "nHill",

# "price": 1200,

# "type": "Mountain Bike",

# "specs": {

# "material": "alloy",

# "weight": "11.3"

# },

# "description": "This budget mountain bike from nHill performs well..."

# "description_embeddings": [

# -0.538114607334137,

# -0.49465855956077576,

# -0.025176964700222015,

# ...

# ]

# }

schema = (

TextField("$.model", no_stem=True, as_name="model"),

TextField("$.brand", no_stem=True, as_name="brand"),

NumericField("$.price", as_name="price"),

TagField("$.type", as_name="type"),

TextField("$.description", as_name="description"),

VectorField(

"$.description_embeddings",

"FLAT",

{

"TYPE": "FLOAT32",

"DIM": VECTOR_DIMENSION,

"DISTANCE_METRIC": "COSINE",

},

as_name="vector",

),

)

definition = IndexDefinition(prefix=["bikes:"], index_type=IndexType.JSON)

res = client.ft("idx:bikes_vss").create_index(fields=schema, definition=definition)

# >>> 'OK'

info = client.ft("idx:bikes_vss").info()

num_docs = info["num_docs"]

indexing_failures = info["hash_indexing_failures"]

# print(f"{num_docs} documents indexed with {indexing_failures} failures")

# >>> 11 documents indexed with 0 failures

query = Query("@brand:Peaknetic")

res = client.ft("idx:bikes_vss").search(query).docs

# print(res)

# >>> [

# Document {

# 'id': 'bikes:008',

# 'payload': None,

# 'brand': 'Peaknetic',

# 'model': 'Soothe Electric bike',

# 'price': '1950', 'description_embeddings': ...

query = Query("@brand:Peaknetic").return_fields("id", "brand", "model", "price")

res = client.ft("idx:bikes_vss").search(query).docs

# print(res)

# >>> [

# Document {

# 'id': 'bikes:008',

# 'payload': None,

# 'brand': 'Peaknetic',

# 'model': 'Soothe Electric bike',

# 'price': '1950'

# },

# Document {

# 'id': 'bikes:009',

# 'payload': None,

# 'brand': 'Peaknetic',

# 'model': 'Secto',

# 'price': '430'

# }

# ]

query = Query("@brand:Peaknetic @price:[0 1000]").return_fields(

"id", "brand", "model", "price"

)

res = client.ft("idx:bikes_vss").search(query).docs

# print(res)

# >>> [

# Document {

# 'id': 'bikes:009',

# 'payload': None,

# 'brand': 'Peaknetic',

# 'model': 'Secto',

# 'price': '430'

# }

# ]

queries = [

"Bike for small kids",

"Best Mountain bikes for kids",

"Cheap Mountain bike for kids",

"Female specific mountain bike",

"Road bike for beginners",

"Commuter bike for people over 60",

"Comfortable commuter bike",

"Good bike for college students",

"Mountain bike for beginners",

"Vintage bike",

"Comfortable city bike",

]

encoded_queries = embedder.encode(queries)

len(encoded_queries)

# >>> 11

def create_query_table(query, queries, encoded_queries, extra_params=None):
    """
    Creates a query table.
    """
    results_list = []
    for i, encoded_query in enumerate(encoded_queries):
        result_docs = (
            client.ft("idx:bikes_vss")
            .search(
                query,
                {"query_vector": np.array(encoded_query, dtype=np.float32).tobytes()}
                | (extra_params if extra_params else {}),
            )
            .docs
        )
        for doc in result_docs:
            vector_score = round(1 - float(doc.vector_score), 2)
            results_list.append(
                {
                    "query": queries[i],
                    "score": vector_score,
                    "id": doc.id,
                    "brand": doc.brand,
                    "model": doc.model,
                    "description": doc.description,
                }
            )

    # Optional: convert the table to Markdown using Pandas
    queries_table = pd.DataFrame(results_list)
    queries_table.sort_values(
        by=["query", "score"], ascending=[True, False], inplace=True
    )
    queries_table["query"] = queries_table.groupby("query")["query"].transform(
        lambda x: [x.iloc[0]] + [""] * (len(x) - 1)
    )
    queries_table["description"] = queries_table["description"].apply(
        lambda x: (x[:497] + "...") if len(x) > 500 else x
    )
    return queries_table.to_markdown(index=False)
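The query_vector parameter in the function above is sent to Redis as raw bytes. As a minimal sketch of what that blob contains, the standard-library struct module can pack the same 32-bit floats. This assumes a little-endian layout, which is what tobytes() produces on common little-endian platforms; pack_float32 and unpack_float32 are hypothetical helper names used only for illustration.

```python
import struct


def pack_float32(values):
    """Pack a sequence of floats as consecutive little-endian 32-bit floats."""
    return struct.pack(f"<{len(values)}f", *values)


def unpack_float32(blob):
    """Inverse of pack_float32: every component occupies 4 bytes."""
    count = len(blob) // 4
    return list(struct.unpack(f"<{count}f", blob))


# Toy vector (hypothetical); the values are exactly representable in float32.
blob = pack_float32([0.5, -1.25, 2.0])
print(len(blob))             # number of bytes: 4 per dimension
print(unpack_float32(blob))  # the original components, round-tripped
```

By the same arithmetic, a 768-dimension FLOAT32 embedding becomes a 3072-byte blob.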

query = (
    Query("(*)=>[KNN 3 @vector $query_vector AS vector_score]")
    .sort_by("vector_score")
    .return_fields("vector_score", "id", "brand", "model", "description")
    .dialect(2)
)

table = create_query_table(query, queries, encoded_queries)

print(table)

# >>> | Best Mountain bikes for kids | 0.54 | bikes:003...
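To make the KNN query above more concrete, here is a minimal pure-Python sketch of what a brute-force nearest-neighbor search with the COSINE metric computes. It is an illustration under stated assumptions, not the Redis implementation: the helper names cosine_distance and knn and the three-dimensional toy vectors are hypothetical, and the score conversion mirrors the 1 - vector_score step in create_query_table.

```python
import math


def cosine_distance(a, b):
    """Return 1 - cosine similarity, the distance reported by the COSINE metric."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


def knn(query_vector, documents, k=3):
    """Brute-force top-k: score every document, keep the k smallest distances."""
    scored = [
        (doc_id, cosine_distance(query_vector, vector))
        for doc_id, vector in documents.items()
    ]
    scored.sort(key=lambda pair: pair[1])
    return scored[:k]


# Toy data (hypothetical): 3-dimensional stand-ins for the real embeddings.
toy_documents = {
    "bikes:001": [1.0, 0.0, 0.0],
    "bikes:002": [0.0, 1.0, 0.0],
    "bikes:003": [1.0, 1.0, 0.0],
    "bikes:004": [0.0, 0.0, 1.0],
}

nearest = knn([1.0, 0.1, 0.0], toy_documents, k=3)
for doc_id, distance in nearest:
    # Same conversion as create_query_table: similarity = 1 - distance.
    print(doc_id, round(1 - distance, 2))
```

A smaller distance means a closer match, which is why the query sorts by vector_score in ascending order before the table converts it to a similarity.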

hybrid_query = (
    Query("(@brand:Peaknetic)=>[KNN 3 @vector $query_vector AS vector_score]")
    .sort_by("vector_score")
    .return_fields("vector_score", "id", "brand", "model", "description")
    .dialect(2)
)

table = create_query_table(hybrid_query, queries, encoded_queries)

print(table)

# >>> | Best Mountain bikes for kids | 0.3 | bikes:008...

range_query = (
    Query(
        "@vector:[VECTOR_RANGE $range $query_vector]=>"
        "{$YIELD_DISTANCE_AS: vector_score}"
    )
    .sort_by("vector_score")
    .return_fields("vector_score", "id", "brand", "model", "description")
    .paging(0, 4)
    .dialect(2)
)

table = create_query_table(
    range_query,
    queries[:1],
    encoded_queries[:1],
    {"range": 0.55},
)

print(table)

# >>> | Bike for small kids | 0.52 | bikes:001 | Velorim |...
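The range query differs from the KNN queries in that it returns every vector within a distance radius rather than a fixed number of neighbors; the paging(0, 4) call then caps how many rows come back. A rough pure-Python sketch of that behavior follows. It assumes cosine distance and an inclusive radius boundary, and the names range_search, radius, offset, and limit, along with the toy vectors, are hypothetical.

```python
import math


def cosine_distance(a, b):
    """Return 1 - cosine similarity."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


def range_search(query_vector, documents, radius, offset=0, limit=4):
    """Keep documents within the radius, nearest first, then apply paging."""
    hits = []
    for doc_id, vector in documents.items():
        distance = cosine_distance(query_vector, vector)
        if distance <= radius:  # assumption: the boundary is inclusive
            hits.append((doc_id, distance))
    hits.sort(key=lambda pair: pair[1])
    return hits[offset : offset + limit]


# Toy data (hypothetical): 3-dimensional stand-ins for the real embeddings.
toy_documents = {
    "bikes:001": [1.0, 0.0, 0.0],
    "bikes:002": [0.0, 1.0, 0.0],
    "bikes:003": [1.0, 1.0, 0.0],
    "bikes:004": [0.0, 0.0, 1.0],
}

in_range = range_search([1.0, 0.1, 0.0], toy_documents, radius=0.55)
for doc_id, distance in in_range:
    print(doc_id, round(1 - distance, 2))
```

Unlike KNN, the number of results here depends on the data: a tighter radius can return nothing at all, while a looser one returns as many rows as the paging limit allows.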

Inspect one of the updated bike documents using the JSON.GET command:

"""

Code samples for vector database quickstart pages:

https://redis.io/docs/latest/develop/get-started/vector-database/

"""

import json

import time

import numpy as np

import pandas as pd

import requests

import redis

from redis.commands.search.field import (
    NumericField,
    TagField,
    TextField,
    VectorField,
)

from redis.commands.search.index_definition import IndexDefinition, IndexType

from redis.commands.search.query import Query

from sentence_transformers import SentenceTransformer

URL = (
    "https://raw.githubusercontent.com/bsbodden/redis_vss_getting_started"
    "/main/data/bikes.json"
)

response = requests.get(URL, timeout=10)

bikes = response.json()

json.dumps(bikes[0], indent=2)

client = redis.Redis(host="localhost", port=6379, decode_responses=True)

res = client.ping()

# >>> True

pipeline = client.pipeline()

for i, bike in enumerate(bikes, start=1):
    redis_key = f"bikes:{i:03}"
    pipeline.json().set(redis_key, "$", bike)

res = pipeline.execute()

# >>> [True, True, True, True, True, True, True, True, True, True, True]

res = client.json().get("bikes:010", "$.model")

# >>> ['Summit']

keys = sorted(client.keys("bikes:*"))

# >>> ['bikes:001', 'bikes:002', ..., 'bikes:011']

descriptions = client.json().mget(keys, "$.description")

descriptions = [item for sublist in descriptions for item in sublist]

embedder = SentenceTransformer("msmarco-distilbert-base-v4")

embeddings = embedder.encode(descriptions).astype(np.float32).tolist()

VECTOR_DIMENSION = len(embeddings[0])

# >>> 768

pipeline = client.pipeline()

for key, embedding in zip(keys, embeddings):
    pipeline.json().set(key, "$.description_embeddings", embedding)

pipeline.execute()

# >>> [True, True, True, True, True, True, True, True, True, True, True]

res = client.json().get("bikes:010")

# >>>
# {
#   "model": "Summit",
#   "brand": "nHill",
#   "price": 1200,
#   "type": "Mountain Bike",
#   "specs": {
#     "material": "alloy",
#     "weight": "11.3"
#   },
#   "description": "This budget mountain bike from nHill performs well...",
#   "description_embeddings": [
#     -0.538114607334137,
#     -0.49465855956077576,
#     -0.025176964700222015,
#     ...
#   ]
# }

schema = (
    TextField("$.model", no_stem=True, as_name="model"),
    TextField("$.brand", no_stem=True, as_name="brand"),
    NumericField("$.price", as_name="price"),
    TagField("$.type", as_name="type"),
    TextField("$.description", as_name="description"),
    VectorField(
        "$.description_embeddings",
        "FLAT",
        {
            "TYPE": "FLOAT32",
            "DIM": VECTOR_DIMENSION,
            "DISTANCE_METRIC": "COSINE",
        },
        as_name="vector",
    ),
)

definition = IndexDefinition(prefix=["bikes:"], index_type=IndexType.JSON)

res = client.ft("idx:bikes_vss").create_index(fields=schema, definition=definition)